
5/8/2023 Parallel computing

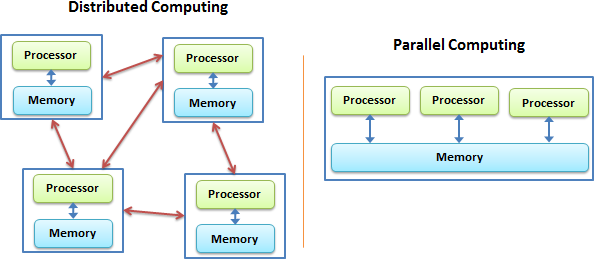

In order to solve a problem faster, or to compute larger data sets than would otherwise be possible, work can often be divided into pieces and executed in parallel, as mentioned in Getting Started. There are many kinds of parallelization. In the ideal case, doubling the number of execution units cuts the runtime in half. There are several reasons why the ideal case is usually not met; a few of them are:

- Overhead because of synchronization and communication.
- Bottlenecks in the parallel computer design.
- Load imbalances: one processor has more work than the others, causing them to wait.
- Serial parts in the program which may not be parallelized at all (Amdahl's Law).

All parallelization approaches can be coarsely classified as belonging to the Distributed Memory (DM) or the Shared Memory (SM) class. The Distributed Memory paradigm has the decisive feature of working beyond the boundaries of a single physical node / operating system instance, opening the possibility of utilizing much more (potentially cheaper) hardware. However, the network used is crucial for DM performance and scalability. Note that a Distributed Memory approach can typically also be used on a Shared Memory system. Through continuous development, some approaches previously known as DM have gained features related to SM, and conversely attempts are being made to make SM approaches runnable on DM clusters, which makes a clean dichotomy difficult or even impossible in many cases.

In the context of HPC, the well-known approaches can be itemised (the list is not final!):

- Accelerators and other devices, like GPGPU; the latest OpenMP standards introduce offloading.
- OpenMP (not to be confused with Open MPI, which is an MPI implementation).
- Auto-parallelization (compiler-introduced threading).

The following is a short description of the basic concepts of Shared Memory Systems and Distributed Memory Systems. Information on how to start/run/use an existing parallel code can be found in the OpenMP or MPI article.

Shared Memory programming works like the communication of multiple people who are cleaning a house via a pin board. There is one shared memory (the pin board in the analogy) where everybody can see what everybody else is doing, how far they have gotten, and which results they have obtained (e.g. the bathroom is already clean). As in the physical world, there are logistical limits on how many parallel units (people) can use the memory (pin board) efficiently and on how big it can be.
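The pin-board analogy for Shared Memory programming described above can be sketched with Python threads. This is only an illustration of the idea, not how OpenMP is used in practice: the names `clean_house`, `pin_board` and `board_lock` are made up for this example, and in CPython the global interpreter lock means these threads share memory but do not run compute-bound work truly in parallel.

```python
import threading
from queue import Queue, Empty


def clean_house(rooms, num_workers=3):
    """Clean all rooms with num_workers threads that share one pin board."""
    todo = Queue()
    for room in rooms:
        todo.put(room)

    pin_board = {}                 # shared memory: visible to every worker
    board_lock = threading.Lock()  # only one worker pins a note at a time

    def worker(name):
        while True:
            try:
                room = todo.get_nowait()   # take the next unclaimed room
            except Empty:
                return                     # nothing left to do
            # (the actual cleaning work would happen here)
            with board_lock:
                pin_board[room] = name     # note: this room is clean, done by name

    threads = [threading.Thread(target=worker, args=(f"worker-{i}",))
               for i in range(num_workers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()                           # wait until everybody has finished
    return pin_board


if __name__ == "__main__":
    board = clean_house(["bathroom", "kitchen", "hall", "bedroom"])
    print(sorted(board))
```

Every worker reads from and writes to the same `pin_board`, which is the essence of the shared memory model. The lock stands in for the synchronization overhead that grows as more workers compete for the same board.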
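To make the limit imposed by serial program parts more concrete, here is a small sketch of Amdahl's Law in its usual form, S(n) = 1 / ((1 - p) + p / n), where p is the fraction of the runtime that can be parallelized and n is the number of execution units. The function name `amdahl_speedup` and the value p = 0.9 are assumptions chosen for illustration only.

```python
def amdahl_speedup(parallel_fraction, units):
    """Upper bound on speedup when only parallel_fraction of the runtime scales."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if units < 1:
        raise ValueError("units must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    # The serial part keeps its full cost; only the parallel part is divided.
    return 1.0 / (serial_fraction + parallel_fraction / units)


if __name__ == "__main__":
    # Assumed example: 90% of the runtime is parallelizable.
    for units in (1, 2, 4, 8, 16, 1024):
        print(units, round(amdahl_speedup(0.9, units), 2))
```

Under this assumption, going from one to two execution units yields a speedup of about 1.82 rather than the ideal 2, and no number of units can push the speedup to 10 or beyond, because the remaining 10% of the runtime stays serial.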
